When you imagine the future of technology, what do you see? Most of us imagine sleeker screens, faster processors, and smarter AI. But we rarely imagine the power cord required to make it all happen.

For decades, computing advanced without forcing us to think about its physical footprint. The digital world felt detached from the material one. “The cloud.” Clean. Invisible. Infinitely scalable.

But that illusion is breaking.

As we move from the era of information into the era of intelligence, computing is no longer light. It is industrial.

And for the first time, the limiting factor isn’t innovation.

It’s electricity.

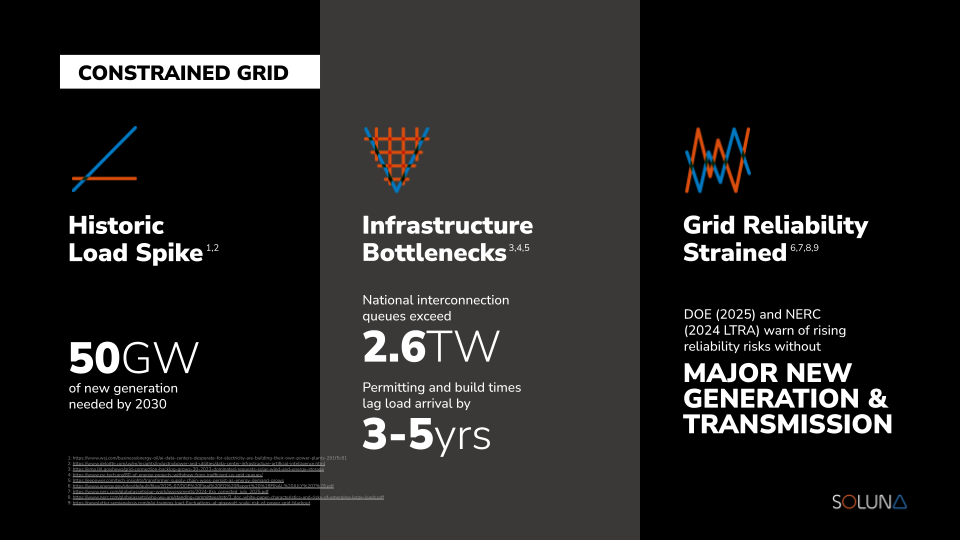

U.S. data center power demand is projected to more than double over the next decade, driven largely by AI workloads. At the same time, utilities across multiple regions are reporting multi-year interconnection queues for new large loads. The bottleneck is no longer chips. It is power delivery.

The story of modern computing is often told as a story of ideas, code, and speed. But underneath every breakthrough is a quieter story about infrastructure: who controls power, how it moves, and where its limits lie. That infrastructure story is now front and center.

To understand why the grid is straining today, let’s look at how we got here.

Tracking the Momentum: From Bytes to Brute Force

In the 1980s, the internet connected a small number of university systems. In the 1990s, the web commercialized it. Early workloads were light. Servers could run in office closets.

Then came hyperscale cloud.

Then mobile.

Then streaming.

Then high-performance computing.

Now we’ve entered the AI era.

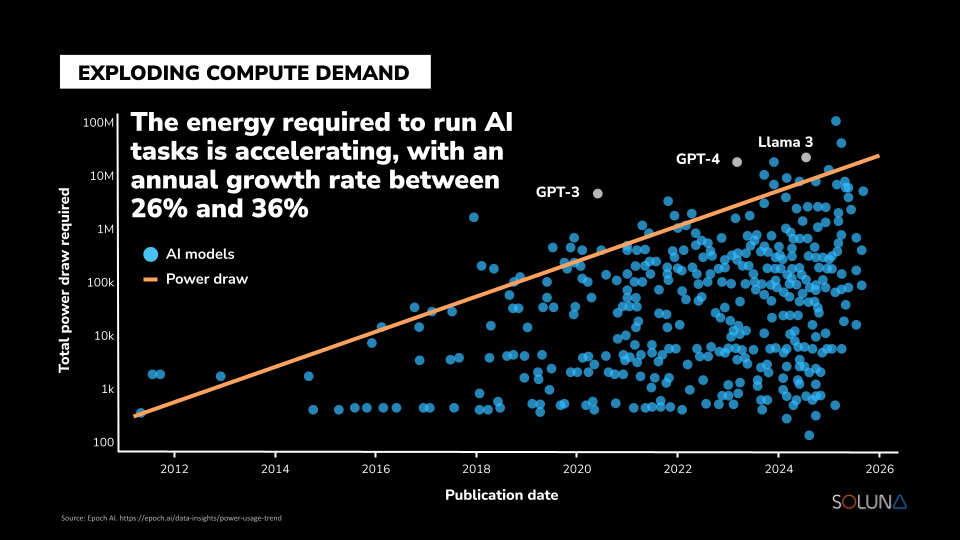

AI training and inference require dense, continuous, industrial-scale power. Unlike early internet applications, these workloads cannot simply be spread across underutilized infrastructure. They demand dedicated capacity measured in megawatts and, increasingly, gigawatts.

We are asking a grid largely designed in the 20th century to support 21st-century compute intensity.

The strain is no longer theoretical.

It shows up in:

- Interconnection delays

- Capacity constraints

- Escalating energy procurement costs

- Multi-year development timelines for new data centers

For investors, this changes the equation. The next generation of digital infrastructure will not be constrained by software capability. It will be constrained by access to scalable, cost-effective energy.

The Infrastructure Wall

We have hit a wall. Not in software innovation, but in physical infrastructure.

The traditional grid model was designed for predictable, one-way energy flows: generation to transmission to load.

It was not designed for:

- Massive AI clusters

- Rapid load growth

- Concentrated compute campuses

- Flexible industrial demand

Utilities across the U.S. are now facing years-long backlogs for new large-load interconnections.

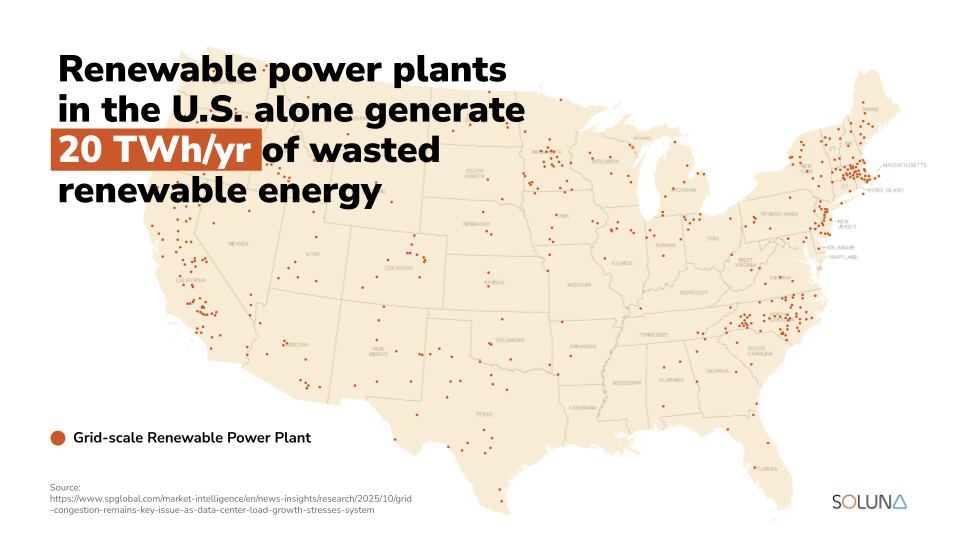

Meanwhile, renewable energy production continues to grow, but much of it is constrained by transmission limits.

In many regions, wind and solar farms generate power that the grid cannot absorb.

That energy is curtailed.

Priced at zero.

Or simply wasted.

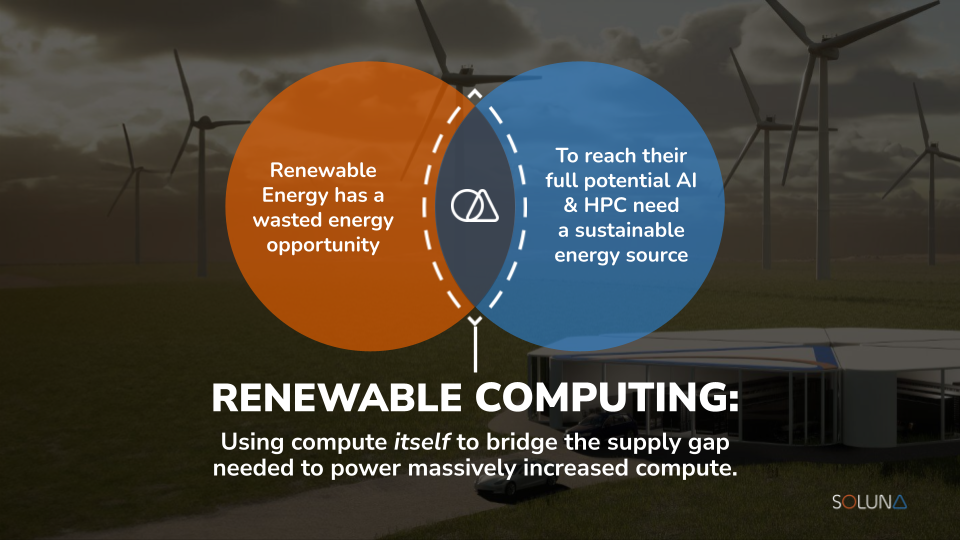

This is the paradox of the AI era: we do not lack generation capacity. We lack infrastructure alignment between renewable generation and compute demand.

Many developers attempt to bridge this gap by relying more heavily on fossil generation.

But this approach increases volatility, exposure to fuel price risk, and long-term carbon liabilities, all factors that institutional investors increasingly scrutinize.

Don’t Scale the Wall. Redesign the System.

Most AI infrastructure developers are attempting to scale within constrained transmission systems, competing for limited grid capacity.

Soluna chose a different path.

Instead of forcing AI into congested grid environments, we build compute where power already exists.

Rather than waiting in interconnection queues, we integrate directly with generation assets.

The solution is not to wait for grid expansion.

It is to redesign how computing interacts with energy infrastructure.

Renewable Computing: The Soluna Model

Soluna pioneered a model called Renewable Computing.

Here is how it works:

Behind-the-Meter Technology

Rather than relying on congested transmission systems, we co-locate our data centers at wind and solar farms.

This behind-the-meter integration enables:

- Direct access to generation

- Reduced transmission dependency

- Faster deployment timelines

- Improved economics for power producers and compute customers

It also allows renewable projects to monetize energy that would otherwise be curtailed. For compute customers, reduced exposure to transmission bottlenecks can translate into more predictable power pricing and a shorter time to energization.

Turning Curtailment into Compute

Wind and solar projects frequently produce energy that cannot be delivered to the grid.

Instead of wasting that power, we convert it into high-density computing, including Bitcoin mining and AI workloads.

Compute becomes:

- A flexible load

- A revenue enhancer for renewable generation

- A mechanism to stabilize project economics

This is not a theoretical construct. Soluna operates energized, renewable-powered compute capacity today, converting stranded energy into revenue-producing digital infrastructure.
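To make the idea of compute as a flexible load concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a description of Soluna's actual dispatch logic: the function name `dispatch_hour`, the 60 MW export limit, the 25 MW data center, and the hourly generation figures are all hypothetical. The simplified model assumes the data center draws only power the grid cannot take.

```python
from dataclasses import dataclass


@dataclass
class HourResult:
    exported_mw: float   # power delivered to the grid
    compute_mw: float    # power absorbed by the co-located data center
    curtailed_mw: float  # power that is still wasted


def dispatch_hour(generation_mw: float, export_limit_mw: float,
                  compute_capacity_mw: float) -> HourResult:
    """Split one hour of generation between grid, compute, and curtailment.

    Simplifying assumption: the grid is served first, and the data center
    only consumes the surplus that would otherwise be curtailed.
    """
    exported = min(generation_mw, export_limit_mw)
    surplus = generation_mw - exported
    compute = min(surplus, compute_capacity_mw)
    return HourResult(exported, compute, surplus - compute)


# Hypothetical hourly output (MW) for a wind farm with a 60 MW export limit
# and a 25 MW behind-the-meter data center.
generation = [40.0, 75.0, 100.0, 55.0]
results = [dispatch_hour(g, 60.0, 25.0) for g in generation]

# Each entry covers one hour, so MW summed over hours gives MWh.
absorbed_mwh = sum(r.compute_mw for r in results)
wasted_mwh = sum(r.curtailed_mw for r in results)
print(f"Absorbed by compute: {absorbed_mwh} MWh, still curtailed: {wasted_mwh} MWh")
```

With these made-up numbers, the data center absorbs 40 MWh that would otherwise be curtailed, and 15 MWh is still lost in the hour when surplus exceeds compute capacity. A real site would also have to account for pricing, ramp rates, and workloads that cannot be interrupted.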

Proven at Scale

A common misconception is that renewable energy cannot support industrial-scale computing.

Soluna has built and energized large-scale renewable-powered data center capacity, delivering high uptime and performance while aligning with long-term energy transition trends.

This is not a sustainability overlay.

It is infrastructure.

The Investment Implication

AI is not just a software revolution. It is a power allocation shift.

The companies that control scalable, low-cost energy access will define the next era of digital infrastructure.

In a constrained grid environment, energy access becomes a competitive advantage.

Renewable Computing is not a niche strategy.

It is a structural response to:

- AI-driven load growth

- Transmission bottlenecks

- Curtailment inefficiencies

- Capital market demand for energy-aligned infrastructure

Soluna is aligning high-performance computing with renewable generation, unlocking stranded power, and converting it into revenue-producing assets.

We are not climbing the AI energy wall.

We are building beyond it.

In the AI era, power is the new infrastructure moat.

Learn more about Soluna’s commitment to Renewable Computing → https://www.solunacomputing.com/