Radiators on chips ensure efficient heat transfer. This system is quiet, water-free, and has a high power density of over 60 kW per rack, optimizing performance.

Soluna builds the world’s most climate-friendly data centers, harnessing renewable energy to power cutting-edge AI innovation. It’s cleaner and more affordable.

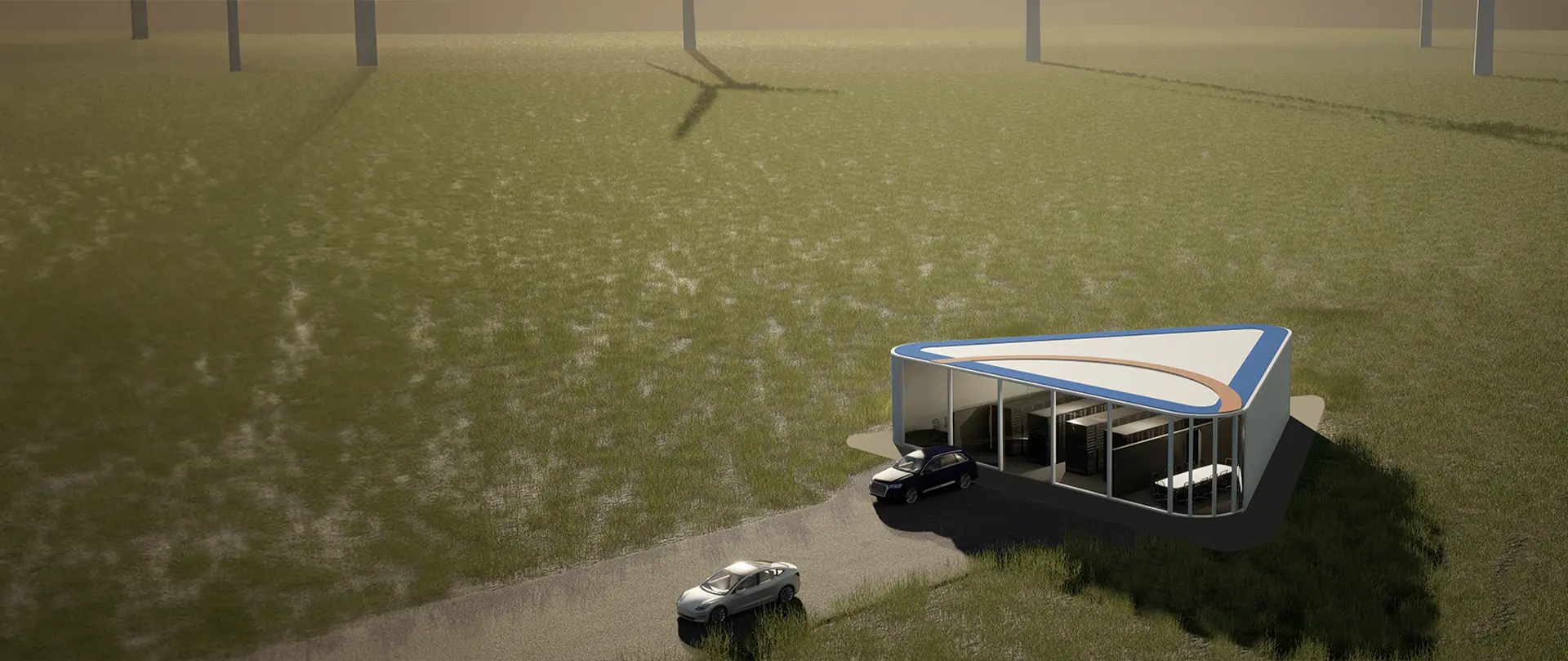

By co-locating with renewable power sources, our data centers are purpose-built to efficiently convert curtailed renewable energy into AI computing.

FINANCE: Fraud Detection

HEALTHCARE: Drug Discovery

RETAIL: Personalized Shopping

TELECOMMUNICATIONS: AI Virtual Assistants

MEDIA & ENTERTAINMENT: Video, Image & Character Development

MANUFACTURING: Predictive Maintenance

FEDERAL: Audit Compliance

ENERGY: Grid Resilience & Seismic Interpretation

Gain access to state-of-the-art AI.

In this panel discussion, CEO John Belizaire joins industry leaders to explore the interconnected roles of AI hardware, cloud infrastructure, and enterprise solutions in driving innovation and efficiency.

Green Datacenters Scalable to Your Needs

We offer a host of AI computing solutions to handle whatever you need to tackle.

18% cleaner than traditional data centers

We’re committed to making AI sustainable. That’s why our data centers are powered by the latest advancements in clean technology.

Direct Liquid Cooling

Green Power

Colocating with green power plants reduces the strain on saturated electrical grids, and consuming otherwise wasted green energy leads to a carbon-negative impact.

Plug & Play

Electrical infrastructure capable of delivering over 250 kW per rack, with cooling and filtration systems designed to accommodate various types of computing systems including CPU, GPU, ASIC, and FPGA.

Scalable

Deployments ranging from 1 to 50,000 GPUs, supporting 1 to 250+ kW per rack, housed in modular buildings that combine to form large campuses.

Zero Water

Soluna’s modular data centers employ our proprietary hyper-efficient airflow system, complemented by closed-loop chillers where necessary.

Aren’t you tired of being bogged down by infrastructure complexities when all you want to do is innovate with AI?

Soluna Cloud is purpose-built to host energy-intensive AI workloads with clean power, smart infrastructure, and no public cloud bloat.

Drowning in infrastructure complexity?

We specialize in turnkey hosting environments optimized for Generative AI—so your team can focus on building, not maintaining.

Is scaling your AI models too costly or too slow?

Our high-performance data centers are built for AI speed and efficiency, delivering the reliability and capacity you need to scale with confidence.

Worried about speed-to-market with your AI models?

In today’s fast-paced business environment, every minute counts. With Soluna Cloud, you can accelerate your AI initiatives. By eliminating the need to manage the complexities of AI pipelines and hardware infrastructure, we help you leap ahead in the AI race.

Get Started Today

Artificial Intelligence

Soluna Cloud makes your Generative AI development journey effortless by providing a trusted platform that is simple to use and lightning-fast.

Build On Trusted Foundations

We ensure reliability and stability for your AI projects. With robust infrastructure and industry-leading security measures in place, you can trust Soluna Cloud to support your development efforts without interruption.

Train, Tune, and Deploy Models Fast

Speed is critical in AI development. Our platform is optimized for lightning-fast foundation model training, tuning, and inferencing, enabling you to iterate quickly and efficiently. With Soluna Cloud, you can deploy models at scale without compromising performance or reliability.

Integrate Seamlessly

Seamless integration is key to a smooth AI development process. Whether you’re integrating with existing systems or building from scratch, our platform fits into your workflow, ensuring a frictionless experience from start to finish.
